Compressive Transformer vs LSTM. a summary of the long term memory… | by Ahmed Hashesh | Embedded House | Medium

![[R] Infinite Memory Transformer: Attending to Arbitrarily Long Contexts Without Increasing Computation Burden : r/MachineLearning](https://external-preview.redd.it/PUHTcbxlDKI49rjD2OQYAvwo6lUyytX-6Z25BiUiZRg.jpg?width=640&crop=smart&auto=webp&s=2b02611b40865d78119f7bebc76c3e6734a72575)

[R] Infinite Memory Transformer: Attending to Arbitrarily Long Contexts Without Increasing Computation Burden : r/MachineLearning

Why are LSTMs struggling to matchup with Transformers? | by Harshith Nadendla | Analytics Vidhya | Medium

Transformer Memory Requirements – Trenton Bricken – Interested in Machine Learning, Neuroscience, and Original Glazed Krispy Kreme Doughnuts.

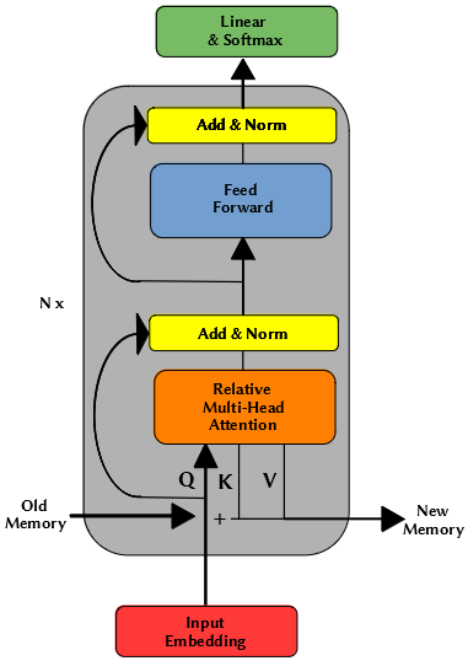

![[PDF] Streaming Transformer-based Acoustic Models Using Self-attention with Augmented Memory | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/768e5f9b019c27babbfaf817a5bb20316b9df113/2-Figure1-1.png)

[PDF] Streaming Transformer-based Acoustic Models Using Self-attention with Augmented Memory | Semantic Scholar

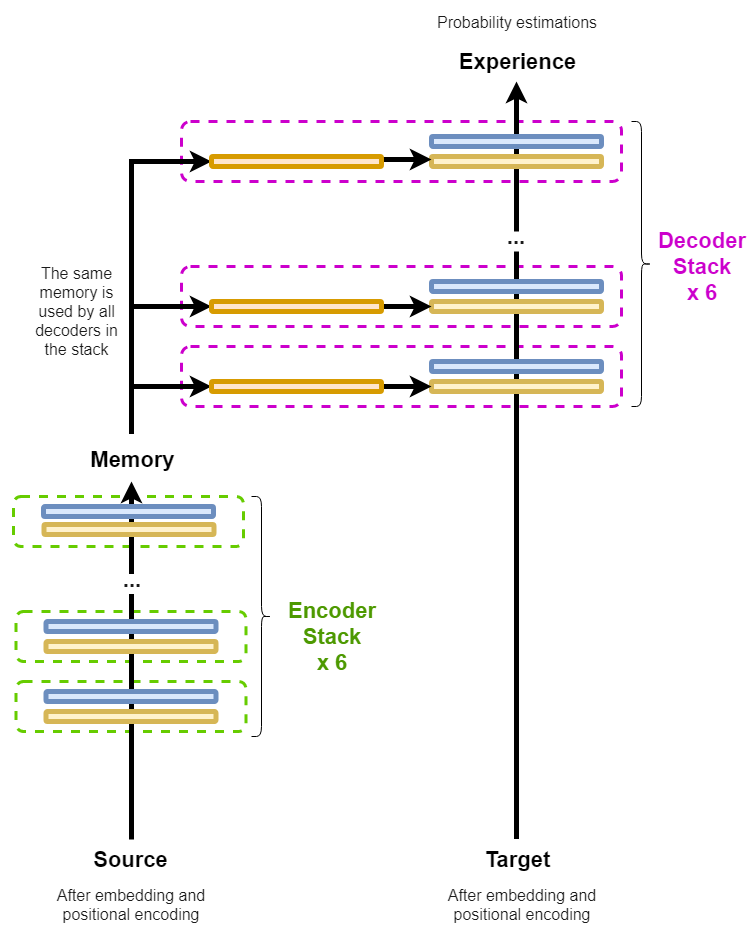

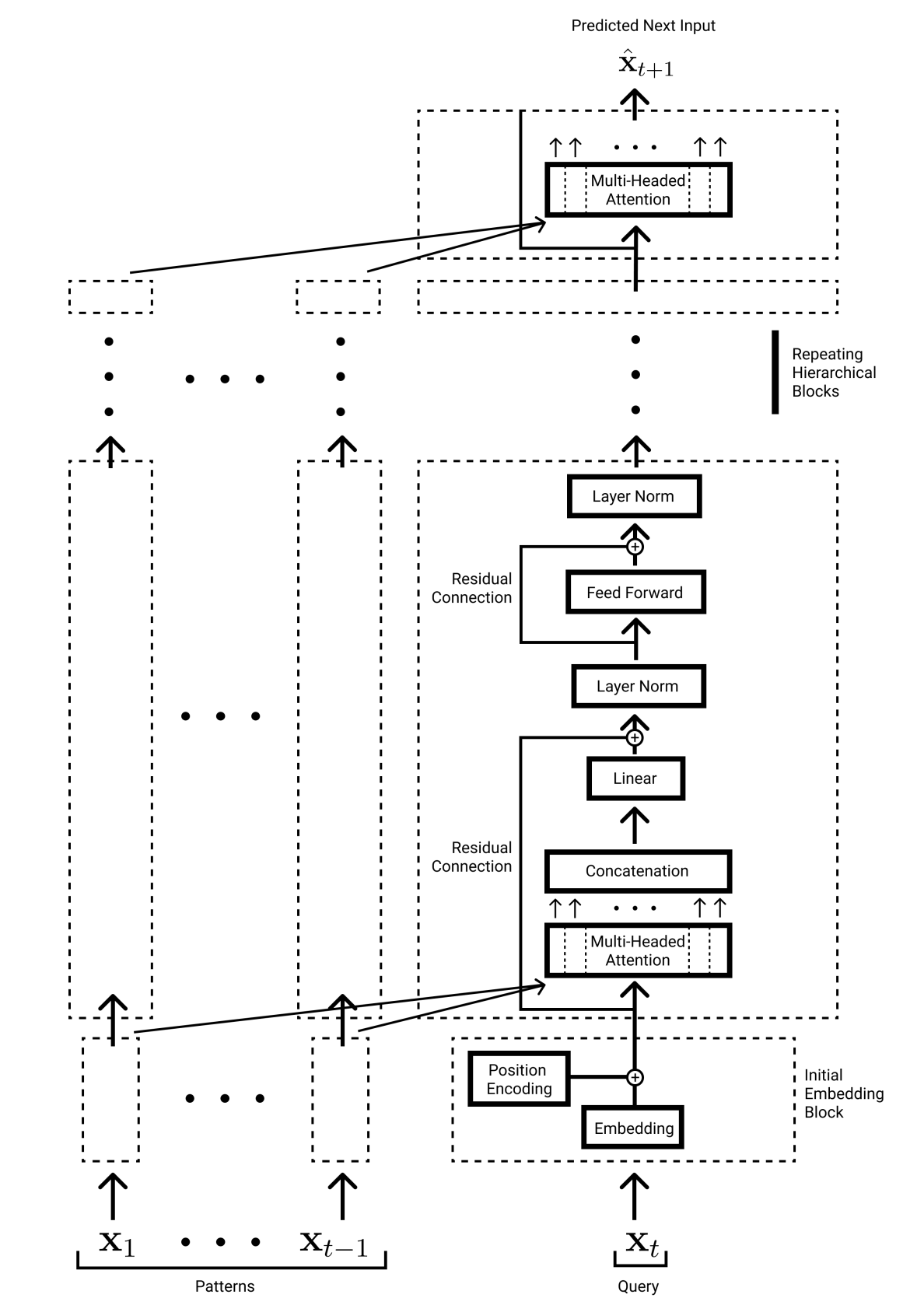

Finetuning a 1B vanilla Transformer model to use external memory of... | Download Scientific Diagram

∞-former: Infinite Memory Transformer (aka Infty-Former / Infinity-Former, Research Paper Explained) - YouTube

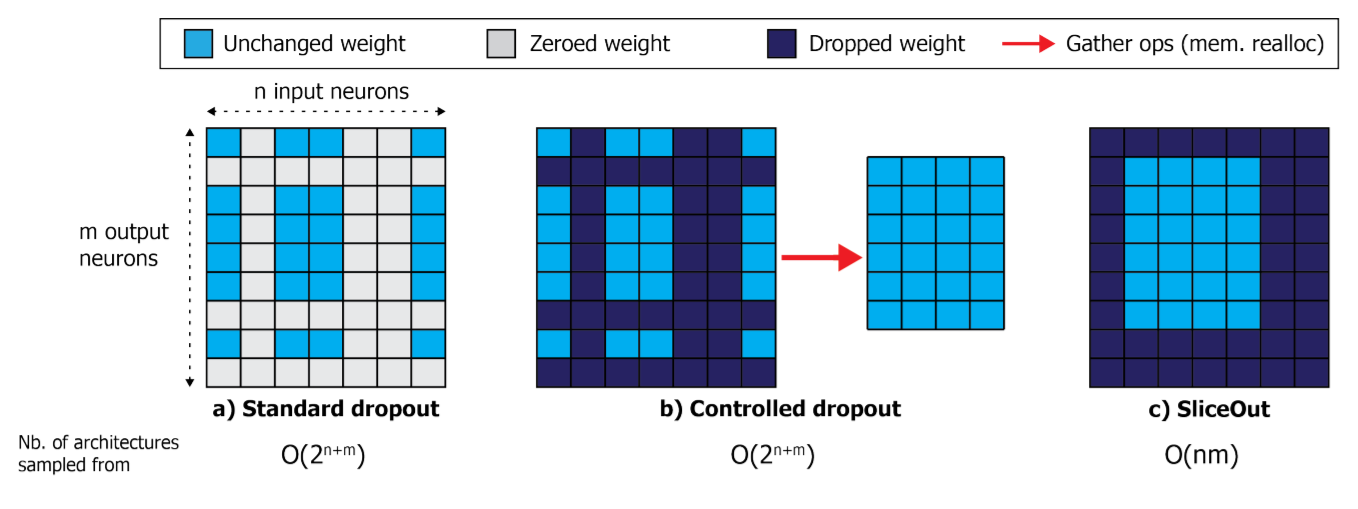

SliceOut paper, 10-40% speedups and memory reduction with Wide ResNets, EfficientNets, and Transformer - Deep Learning - fast.ai Course Forums